Mar 19, 2026

Why 99% of People Are Using AI Wrong

An Interview with Drew Bent, Anthropic's Head of Education

Founder Focused

Drew Bent has spent his entire career trying to solve one problem: how do you give every student access to a great tutor? He tutored peers in high school, founded a nonprofit, and became a high school math teacher. Each step brought him closer. None succeeded at scale.

Now, as Head of Education at Anthropic, he argues that people aren’t falling short with AI because of the tools they choose. They’re falling short because of how they think about them. For many, AI still resembles an upgraded search engine: query, get an answer, move on. That approach is already outdated.

Bent contrasts this with “AI natives”: users who treat these systems not as tools, but as collaborators. They provide more context, ask better questions, and get fundamentally different results. Using AI well isn’t just technical anymore. It’s a social skill.

In this interview, Bent shows how to stay ahead or risk falling behind.

Watch the full interview now on EO's YouTube channel! Below is the complete transcription of the interview. Minor edits have been made for clarity and readability.

Key Highlights:

"Think like an AI native." It's the core message from Drew Bent, Head of Education at Anthropic, and it might be the most important mindset shift of our generation.

Bent has spent his career obsessing over one question: how do we scale world-class tutoring to everyone, everywhere? From founding a tutoring nonprofit to teaching high school math, he's now at the frontier of AI-powered education at Anthropic, the company behind Claude.

In this interview, Bent breaks down why most people dramatically underestimate today's AI capabilities, what a landmark Anthropic study revealed about AI and learning outcomes, and why working with AI is no longer a technical skill but a social one.

The AI Native Mindset

How do AI-native people think differently about these tools compared to those who started using AI back in 2022?

Drew Bent: One trap that I don't think the AI native people fall into is misjudging the technology. They understand what the capabilities are like today, and they treat the AI in that very powerful way. Someone who was using AI tools in 2022, by contrast, still sort of sees it as an assistant. Maybe they're misjudging the capabilities.

And so what we all need to do is we need to sort of think like the AI native person, someone who just grew up using AI from day one. People often are saying, "Okay, what's the step-by-step playbook of how to do things?" That made sense last year. And I think as we're looking to where the technology is now, what we really need is to give more of this context to the AI and then let it do its thing.

Is working with AI a technical skill or something else entirely?

Drew Bent: In some ways, it is a social skill. It's not just a technical skill. Sure, early days of AI was how do you prompt it this way, but ultimately you have to treat this more as a colleague, as a collaborator. And so then it becomes more like a social skill.

Could you please introduce yourself?

Drew Bent: My name is Drew Bent. I lead education at Anthropic. My whole career has been focused on tutoring. My parents are educators. I grew up just loving learning and loving peer tutoring people at my school. Later I founded a tutoring nonprofit, became a high school math teacher, and now I'm working on AI tutors, trying to figure out what does great tutoring look like, and more importantly, how do we scale that up. A world-class education to everyone everywhere. It was worth fighting for, but to do it at scale was always going to be the challenge. But now, finally with AI, we're finally able to take something that was previously only available if you had that amount of money and now bring it to the whole world.

Lesson 1: Don't Underestimate AI's Capabilities

What's the most common mistake people make when assigning work to AI tools?

Drew Bent: One of the things that I think holds us all back is we give AI tools pretty simple problems when we could be giving them much more complex problems. That's the thing that I've struggled with where you kind of know the AI models of last year. And so you sort of give it a problem that was like the hardest type of problem that AI tool could have done last year. But then when I look at my colleagues, they're doing all sorts of powerful things with AI tools, things I didn't even know were possible.

And so a lot of this is just kind of seeing what others are doing, being able to open up your eyes to what the current AI models are able to do today, and then lots of experimentation. Ultimately, you're going to need to feel it yourself. You're going to need to be the one to go in and try it out. But what that means is you're constantly going to need to be raising your ambition of the type of problems. You are probably handholding these AI tools originally, but how can you start to give it more latitude to actually make more judgement calls?

What advantage do AI-native users in places like Rwanda and India have over more experienced users?

Drew Bent: I've spent time in places like Rwanda and India where people are AI native; some of the first technology they've been using outside of cell phones and WhatsApp are AI tools. One trap that I don't think the AI native people fall into is misjudging the technology. They understand what the capabilities are like today and treat the AI in that very powerful way. Someone who was using AI tools in 2022, by contrast, still sees it as an assistant. Maybe they're misjudging the capabilities. Someone who may have never played with this technology before in some ways has the upper hand because they can kind of see what the technology is capable of today and where it's headed. And so what we all need to do is think like the AI native person and ask: what are the models capable of today and what will they be capable of tomorrow?

Why should we constantly raise our ambitions with what we ask AI to do?

Drew Bent: We as humans are very used to linear or static things. It's like you have a colleague and every day you come in to work, they're just getting exponentially better. We don't have a way to think about that. So you're always treating your colleague like they had the capabilities from last month, but actually they could have doubled in the past month. That's a really hard thing to grok.

You need to elevate your ambition with what is possible with these tools and always be pushing yourself to the limits, trying things that are not quite possible in today's AI models. Because then when the next model comes out, you try that same thing and you're at the cutting edge of something that no one else has figured out they could do.

Those who are really good at using AI tools are treating it like something that has gone from an assistant to a collaborator. And eventually, looking ahead, there will be in some cases an inversion of control where the AI model is doing some of the highest level strategic thinking and then delegating to the human for areas that require human taste and human agency.

Lesson 2: How to Be a Top 1% Learner with AI

What did Anthropic's study on AI and coding education reveal about learning outcomes?

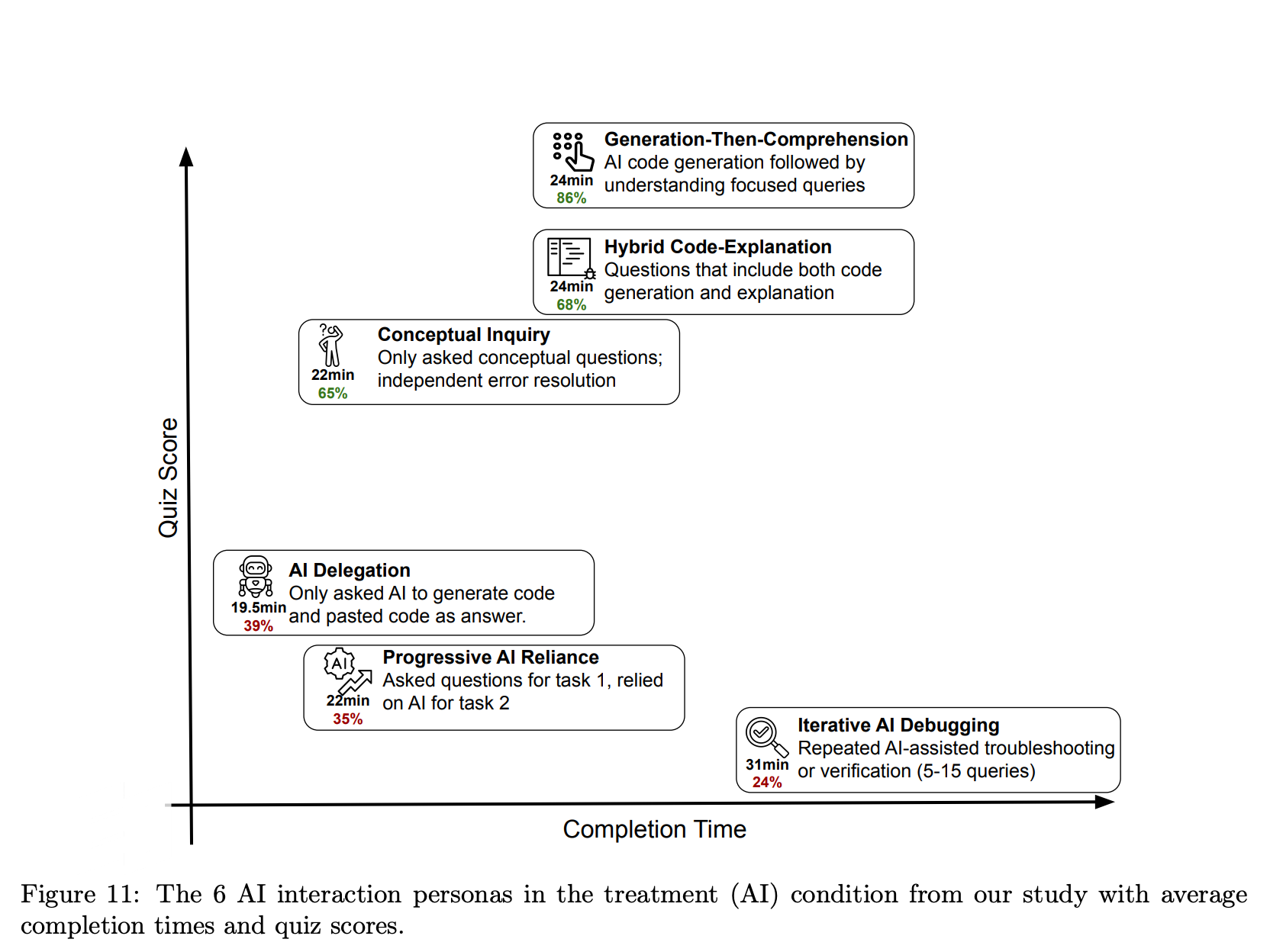

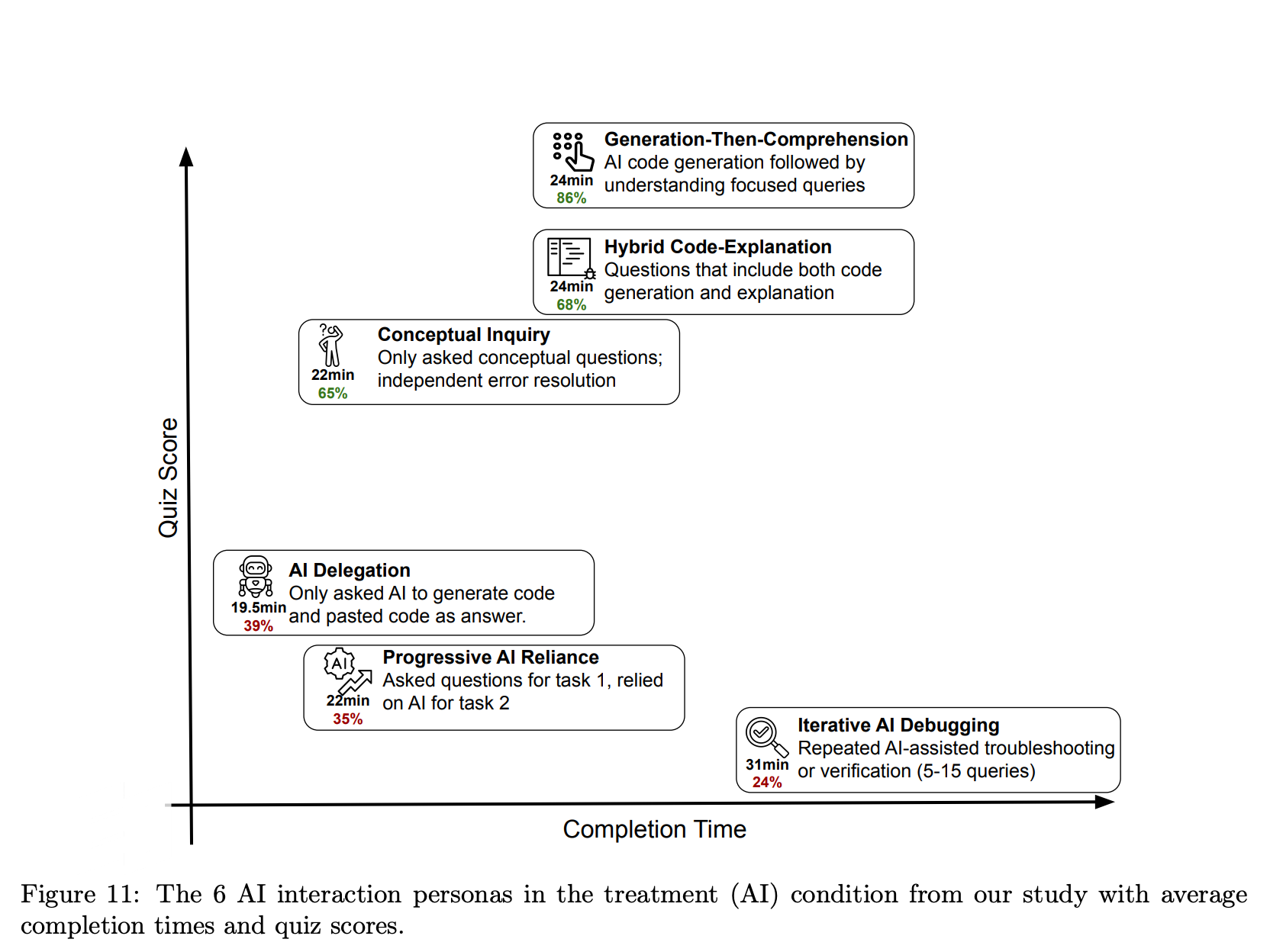

Drew Bent: There was a study that Anthropic did recently where they looked at coding education specifically. They created two groups; both had to do a coding assignment. One could use AI tools, one couldn't. As you might expect, the group that used AI tools finished the assignment much quicker.

But then they had a separate assessment afterwards where neither group could use AI, to assess their understanding of the concepts. What they found is that the group that didn't use AI tools actually performed 17% better. They understood the concepts better because they had slogged through the work without AI and really had to internalize it.

That's the warning for AI and education. The more we use these tools, the more it raises questions of skill atrophy.

Did all AI users perform worse, or does how you use AI make a difference?

Drew Bent: Not all of them did worse. The ones that were using AI tools not in a transactional way but more in an inquiry way, probing and asking questions, actually performed well on the final assessment. How you use the AI tools really matters. It's not just that you're using them or not using them. It's the way in which you're engaging.

What is your goal when you show up to this AI tool? Are you just trying to get the task done as quickly as possible? Or are you really trying to do the task, but also get better and smarter as you're doing it?

What's the right way to approach an AI tool? With a solution in mind or a problem?

Drew Bent: People often go to the AI tool with a particular solution in mind and ask a very particular question because they're trying to get that solution. But really the best practice is probably to come with the problem, not the solution. Say, "This is the thing I'm wrestling with."

When you come into an AI with a particular solution, it's going to give a very narrow answer around that. Versus if you come with a much more open-ended problem, the AI models of today are actually pretty good at helping you wrestle with it. In 2026, we can come with a hairier problem and really try to get that input from the AI tool.

Imagine 10x Beyond the Chatbot

Is the AI chatbot the final form factor for learning?

Drew Bent: One misconception out there is that all this learning is going to take place in the form factor of an AI chatbot. Ultimately, we need to think 10x about what those formats and what this media looks like in which you're engaging with an AI. I don't think we've quite cracked it in 2026.

Take Claude Code — Anthropic's coding agent. It was not meant for learning. And yet we've seen people use it to learn in very creative ways. Not just coding, but learning a new language, learning an economics concept. They'll go into Claude Code and start to build memory and context about what they're learning, about who they are, about how they learn best. So it ultimately becomes a coach.

What is the biggest gap between people who are excellent at using AI versus those who aren't?

Drew Bent: AIs have become quite powerful as we all know, but of course they're only as powerful as the context you give them. This is probably the biggest gap when I look at people who are excellent at using AI tools versus not.

If you give the AI enough context, it can actually usually do pretty well with most types of tasks. So when I go to the AI tool, I actually spend most of my time before I even ask my question throwing in all the context I can about other documents I've written, the company I work at, the questions I'm thinking about. Maybe I'll even throw in a stream of consciousness of thinking I've done around this topic. The AI models can take in a lot of context and bring it all together. But what they're not going to be able to do is with very little context just reason their way through the world, because they won't understand how you're thinking about it.

Lesson 3: The 2030 Classroom Is Nothing Like You'd Expect

What did Anthropic discover when they looked at how people were actually using Claude for education?

Drew Bent: About a year ago we looked into the data around how people were using the tool. We knew people were using Anthropic for coding and software development. But what was surprising was that one of the top use cases was also educators and students using it. When we looked further into the data, we were a little dismayed around how people were using it. Often it was being used as a crutch, very transactionally, students just going in and asking for answers to their homework. And those are the things that honestly keep me up at night.

How Do We Scale Up Truly Personalized Learning?

What does truly personalized learning mean and what role should AI play in the classroom?

Drew Bent: There's been a lot of research showing that one-on-one tutoring can be one of the most effective ways to learn. That's why the elusive dream in AI and education has been how do we scale up truly personalized learning. But there's a little more than just that. We want learning that's personalized, but you also hear it in the word personalized: we want learning that is personal. And ultimately that's human-to-human interaction.

You go to school not just to learn a particular algebra concept or some historical figure. You go to also learn how to interact with your fellow citizens, your colleagues, your friends. So when we think about the role of AI in education, the question is: how can we have AI further the human-to-human connections in the classroom, putting humans at the center?

What do you hope a classroom in 2030 looks like?

Drew Bent: What I would love to see if you go into a classroom in 2030 is you walk in and you don't even see the technology. It's operating behind the scenes. Maybe teachers are using it to save time and build personalized lesson plans and group students, and it's just a rich learning environment. Something that you may see right now if you go into a private school. You have the resources to build this type of environment. But you bring that type of education to every school.

I'm in a WhatsApp group with teachers across the world, in a bunch of different countries, and every morning I wake up I'll see new messages from these teachers sharing how they built custom tools with AIs like Claude. Not just custom lesson plans. These are teachers building flashcard apps for their students, formative assessments. They can just every morning wake up, come up with an idea, build the tool with Claude or Claude Code or Claude Artifacts, and deploy it in class that same day. It builds a much richer learning environment.

What will AI learning tools know about students by 2030?

Drew Bent: These AI tools are going to need to know a lot about your context. They're not going to be using some generic curriculum. They're going to know the context of your school's curriculum, your state curriculum, and be able to tie everything back to that. That's one: they know more about your context.

Two, they're going to know more about you. I was a teacher before, and it would have been very disappointing to all my students if every time I walked into the classroom I said, "Oh yeah, remind me what are you working on and what do you know?" And that's the type of interaction we're having right now in 2026. Where we have to head is a world where this is a real learning companion that understands where you're coming from, and you get to choose to give it this context so that you're growing with it and the AI tools are also growing with you.

Technology Will Be Invisible in the Future Classroom

Tell us about Schoolhouse. What is it and why was it built?

Drew Bent: I've known Sal Khan since I was a high schooler when I first interned at Khan Academy. Before he created Khan Academy, he was doing one-on-one tutoring with his cousins over Skype. His initial vision was always: how can I scale this type of tutoring to more people?

In 2020 we started to wonder how else we could recreate that one-on-one tutoring experience and make it available to everyone. That's why we built Schoolhouse, a peer-to-peer tutoring platform where anyone in the world can log on and receive free peer-to-peer tutoring in subjects like math, science, and test prep, and if you want to give back, you can go volunteer as a tutor.

What did Schoolhouse reveal about global learning communities?

Drew Bent: What we noticed with Schoolhouse is that if we create a global learning community, there's actually more shared elements than you may initially think. I've run tutoring sessions on Schoolhouse where I have someone from Russia, someone from Colombia, someone from the US, and someone from China all in the same Zoom call learning the same topics. And then students will start to share: "In my school, we learn it this way. In my school, we learn it that way."

There's been something kind of magical where you can actually bring people together. The world feels a lot smaller. You have these high schoolers learning together and forming connections that would previously not have been possible. To actually bring them into the same room to have them learn together, I think that's just incredible. AI tutoring is a part of it and we're going to have amazing AI tutors. At the same time, there's a personal dynamic to it. Having someone who cares about your progress, who holds you accountable, is equally important, if not more important.

Why is collaborating with AI a social skill, not just a technical one?

Drew Bent: We have essentially birthed a new species of artificial intelligence into the world. When I think about my own education experience, I went through 15-plus years of school learning how to interact with other humans. But now we're entering a world where we have another type of being, artificial intelligence, which is going to be in our workplaces as a colleague. You're going to just see it show up in Slack one day as a co-worker. It's going to be showing up in schools.

This is a whole different type of skill: how do you not just interact with other humans productively, but how do you collaborate with an AI? And in some ways it is a social skill. It's not just a technical skill. Sure, early days of AI was how do you prompt it this and that way. But I think that era is over. We're done with the days of just technical prompting. Ultimately you have to treat this more as a colleague, as a collaborator. It becomes about what is the way in which I can interact with an AI so that it understands more about what I'm looking for, and I understand more about how the AI works. That's a type of dialogue similar to how we build social skills with other humans.

How does someone get better at working with AI?

Drew Bent: That's why I keep going back to this idea of practice and reps. You just spend time with an AI, start treating it more like a colleague, interacting with it, understanding its limitations today and its capabilities. But that's going to come through practice and repetitions.
